The approach to AI has become more nuanced: organisations have moved from hunting down use cases to clearer prioritisation and a stronger focus on value creation. With this outcome-focused view, it is clear that AI cannot be an isolated initiative.

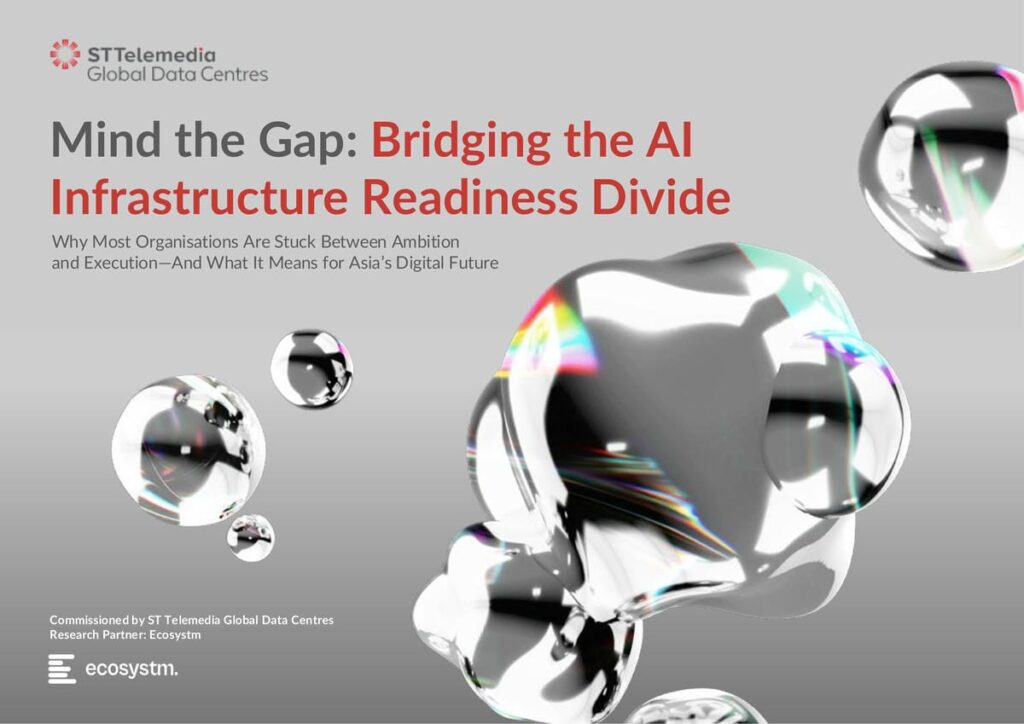

It is in this value creation that the biggest hurdle becomes visible. Across Asia Pacific, organisations are actively experimenting with or deploying newer AI capabilities.

While activity levels are high, the outcomes being achieved remain uneven. Many organisations are building and deploying AI in some form, yet only 10% report measurable business value.

It is well established that organisation-wide AI strategies, supported by sustained investment in skills, infrastructure, and governance foundations, are required to move the needle. However, many technology leaders are still struggling to demonstrate early, visible value that secures broader executive buy-in to enable scale. This challenge is shaped by the environment in which AI operates.

As AI scales, the traditional separation between IT (infrastructure, systems) and data (governance, pipelines, analytics) becomes harder to sustain. Decisions around architecture, data access, compliance, and operations are now tightly interdependent.

What is emerging is a structural shift: AI is driving the convergence of IT and data leadership.

Here are five trends shaping this transition.

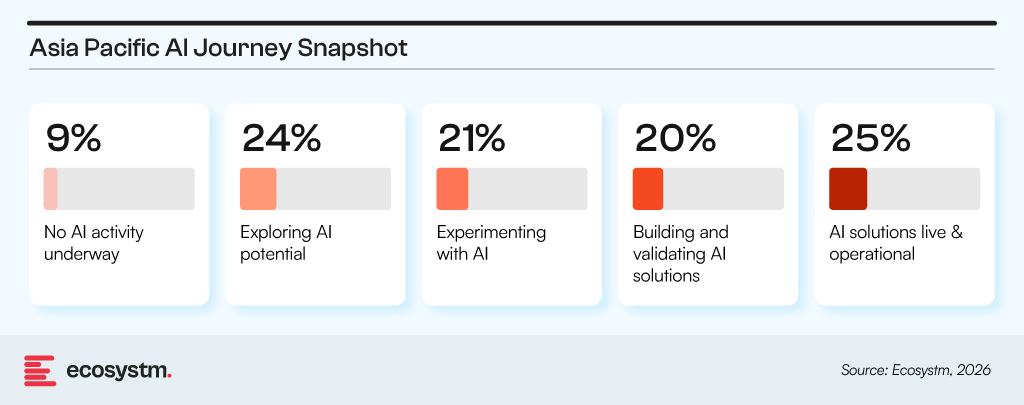

1. Data readiness is now an infrastructure issue

AI deployment is expanding across functions, but data maturity remains uneven.

This is not a data management challenge alone.

The constraint lies in how data is structured, connected, and accessed across the enterprise. In many organisations, data is distributed across legacy systems, cloud platforms, and business applications designed independently. A significant share of effort goes into locating, reconciling, and validating data before it can be used for AI workloads.

Access, latency, and reliability are shaped by infrastructure choices: integration design, storage architecture, deployment models, and the level of standardisation across environments. These determine whether data can support real-time AI use cases or remain limited to delayed, partial views.

Responsibility sits across both IT and data functions. Platform architecture, cloud decisions, and integration patterns shape what the data layer can deliver, alongside governance and analytics practices.

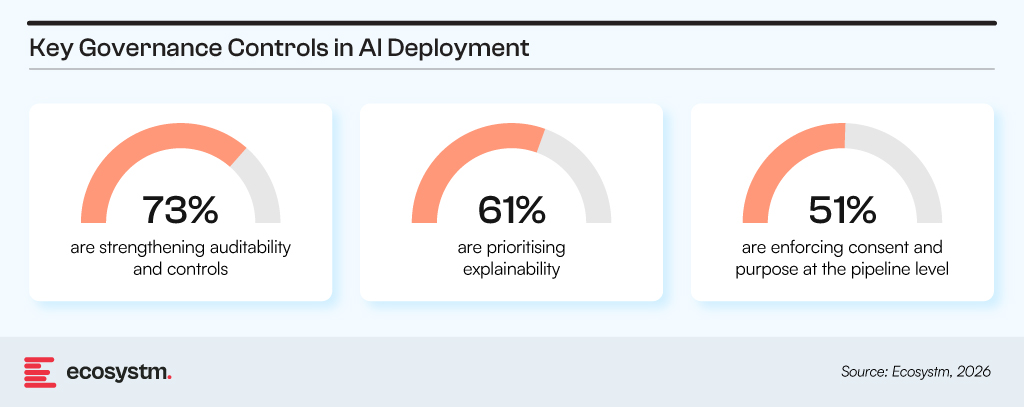

2. Governance is moving into system design

AI is pulling governance closer to where systems are defined and built.

Governance controls are being implemented inside data pipelines and model workflows rather than layered on after deployment.

What is changing is where these decisions sit. Choices around data use, model behaviour, access rules, and retention now shape system design from the outset. Governance is reflected in architecture decisions such as how data is partitioned, how models are trained, and what gets logged or traced.

This shifts coordination across data, security, and platform teams. Governance constraints now form part of design inputs, while architecture determines what compliance and control can realistically be achieved.
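As a rough illustration of governance acting as a design input, the sketch below shows a pipeline read step that enforces an approved-purpose check, masks restricted fields, and records every access attempt before any data reaches model code. It is a minimal sketch under stated assumptions, not a reference implementation: `DatasetPolicy`, `AuditLog`, `governed_read`, the dataset name, and the purpose labels are all hypothetical names introduced for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DatasetPolicy:
    """Hypothetical governance policy attached to a dataset at design time."""
    allowed_purposes: frozenset
    masked_fields: frozenset


@dataclass
class AuditLog:
    """Hypothetical in-memory trace of every access attempt."""
    entries: list = field(default_factory=list)

    def record(self, dataset, purpose, allowed):
        self.entries.append({
            "dataset": dataset,
            "purpose": purpose,
            "allowed": allowed,
            "at": datetime.now(timezone.utc).isoformat(),
        })


def governed_read(dataset, rows, policy, purpose, audit):
    """Return rows for a pipeline step, enforcing the policy before data leaves."""
    allowed = purpose in policy.allowed_purposes
    audit.record(dataset, purpose, allowed)  # log the attempt, allowed or not
    if not allowed:
        raise PermissionError(f"{purpose!r} is not an approved use of {dataset!r}")
    # Mask restricted fields so downstream model code never sees raw values.
    return [
        {key: ("***" if key in policy.masked_fields else value)
         for key, value in row.items()}
        for row in rows
    ]


# Illustrative data only.
policy = DatasetPolicy(
    allowed_purposes=frozenset({"churn_model_training"}),
    masked_fields=frozenset({"email"}),
)
audit = AuditLog()
rows = [{"customer_id": 1, "email": "a@example.com", "tenure_months": 14}]
training_rows = governed_read("customers", rows, policy, "churn_model_training", audit)
```

Because the check sits inside the read path, a workflow cannot reach the data without passing through the policy, and what gets logged is decided when the step is designed, not after deployment.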

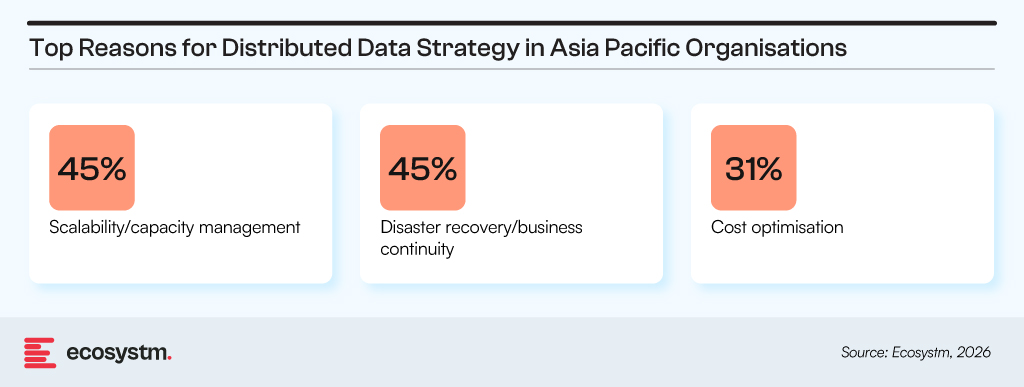

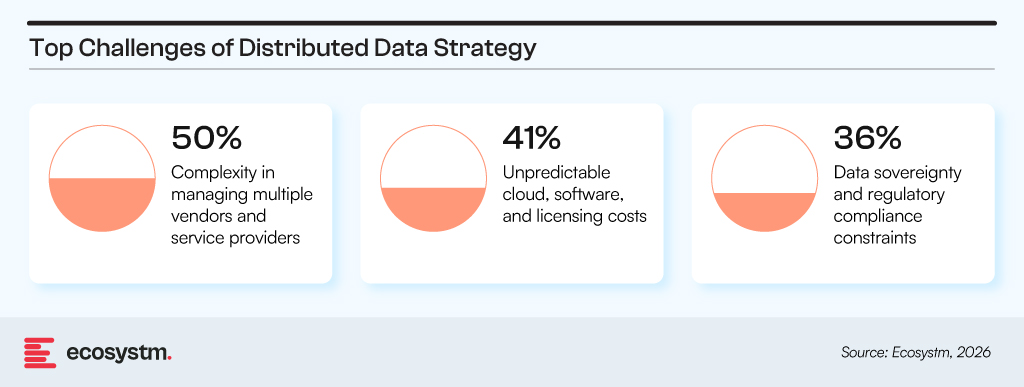

3. Distributed data is creating operational complexity

Organisations are moving toward distributed data models for a few reasons.

In practice, however, a distributed data model is hard to achieve.

A structural change is needed in how enterprise data is organised. Data is now spread across on-premises systems, multiple clouds, and edge environments, creating coordination challenges in synchronisation, governance, and observability. This results not only in management complexity but also in inconsistency in how data is accessed and maintained across environments.

The impact shows up in duplicated datasets, delayed access, and uneven reliability depending on where the data resides. The core challenge is managing fragmentation while maintaining coherence in how data is handled across environments.

AI systems operate on top of this distributed environment, making their performance dependent on the consistency of data across environments rather than availability within any single one. This makes IT architecture and data operations interdependent by design.
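One way to make cross-environment consistency observable is to fingerprint each copy of a dataset and group environments whose copies agree. The sketch below is illustrative only and assumes small in-memory copies; the function names `fingerprint` and `find_divergent_copies` and the environment labels are hypothetical, and a production check would more likely compare partition-level hashes or row counts than whole datasets.

```python
import hashlib
import json
from collections import defaultdict


def fingerprint(rows):
    """Order-independent SHA-256 fingerprint of a list of dict rows."""
    canonical = sorted(json.dumps(row, sort_keys=True) for row in rows)
    return hashlib.sha256("\n".join(canonical).encode("utf-8")).hexdigest()


def find_divergent_copies(copies):
    """Group environments by fingerprint; more than one group means copies disagree."""
    groups = defaultdict(list)
    for environment, rows in copies.items():
        groups[fingerprint(rows)].append(environment)
    return sorted(sorted(environments) for environments in groups.values())


# Illustrative data only: the edge copy holds a stale status for id 2.
copies = {
    "on_prem": [{"id": 1, "status": "active"}, {"id": 2, "status": "closed"}],
    "cloud_a": [{"id": 2, "status": "closed"}, {"id": 1, "status": "active"}],
    "edge": [{"id": 1, "status": "active"}, {"id": 2, "status": "active"}],
}
groups = find_divergent_copies(copies)
```

Here `groups` contains two groups: the on-premises and cloud copies match despite different row order, while the edge copy stands alone. A model reading from the edge would see different data even though every environment is available.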

4. AI performance is determined by system design

Technology modernisation priorities reflect a shift toward system-level performance.

These priorities sit at the system level rather than within any single function. However, constraints remain structural, including rising costs across cloud, software, and infrastructure layers, interoperability gaps between platforms and environments, and skills limitations across legacy and modern technology stacks.

These challenges cut across both infrastructure and data domains. Infrastructure teams are responsible for performance and reliability, while data teams manage access, quality, and consistency. In distributed environments, this naturally creates overlap in areas such as data movement, integration, and real-time operations.

To address this, organisations are investing in automation and AI-enabled operations to improve coordination across systems and reduce fragmentation between infrastructure and data functions. Ultimately, AI performance is defined by how infrastructure and data systems are designed and operated as a single, integrated environment.

5. Trust is becoming a cross-functional responsibility

AI adoption is moving ahead of organisational readiness on trust and control.

As AI moves into decision workflows, customer interactions, and operational processes, trust and control become critical.

Trust is not contained within a single function. Privacy, fairness, auditability, and security sit across multiple layers – data pipelines, infrastructure, APIs, and applications. Issues such as bias or leakage rarely originate in one place; they emerge through interactions across these layers.

This shifts accountability across data, infrastructure, security, and application teams. Each influences trust outcomes through design choices, controls, and operational behaviour. Trust outcomes depend on coordination across IT, data, and business functions operating on the same system.

Where Enterprise Systems & Operating Models Meet

Data, infrastructure, and governance decisions are now tightly linked. Choices on architecture affect data access. Data distribution affects infrastructure load. Governance requirements reshape both. As a result, IT and data leadership are working on the same set of problems from different entry points, with overlapping accountability in practice.

Platform approaches help make this manageable by creating a shared layer for access, integration, and control across distributed environments. They reduce duplication of effort and make it easier to maintain consistency across systems that are otherwise operating independently.

But platforms alone do not resolve the underlying issue. The way most organisations are structured still reflects separate ownership of infrastructure, data, security, and applications, while AI systems operate across all of them at once. That gap shows up in execution friction, unclear ownership of outcomes, and difficulty scaling beyond pilots.

Moving from experimentation to sustained value will depend on aligning these two layers: how systems are designed, and how responsibility for them is organised.