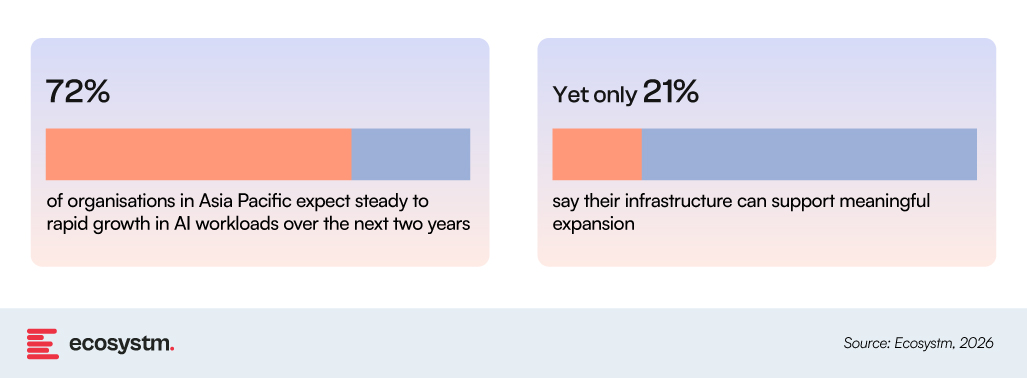

Across Asia Pacific, AI adoption is moving faster than the infrastructure supporting it. Organisations are scaling use cases, but environments remain fragmented across on-prem systems, cloud platforms, and hybrid estates not designed for sustained AI workloads.

This gap between AI ambition and infrastructure readiness is widening.

The pressure is already shaping infrastructure decisions. Instead of broad modernisation programs, organisations are responding in targeted ways, focused on where workloads run, how data moves, and how environments are governed.

A consistent pattern is emerging: AI workloads are being distributed across cloud and on-prem environments, while performance constraints surface across compute, storage, and network layers simultaneously. The challenge is less about individual systems, and more about how these layers behave across distributed architectures.

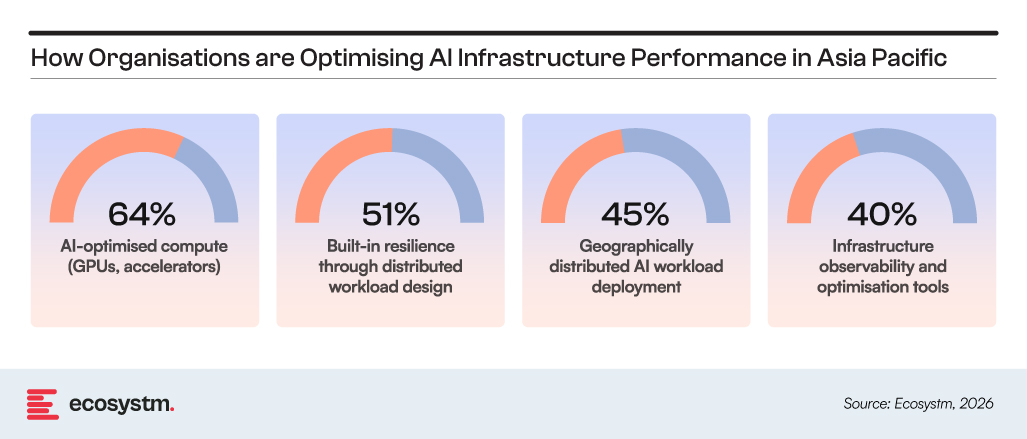

How Organisations are Responding

Investment is shifting away from full-stack replacement toward improving how AI workloads operate across distributed environments, particularly across performance, resilience, and operational visibility.

This highlights a clear direction of travel: organisations are not simply expanding infrastructure capacity; they are re-architecting for performance, distribution, and resilience under AI load.

The combination of high demand pressure, uneven readiness, and targeted optimisation strategies sets the context for what is unfolding across Asia Pacific infrastructure. These patterns are not theoretical. They are drawn from Ecosystm research and validated through conversations with technology leaders across industries and markets in the region.

Five clear infrastructure trends are emerging in the region.

1. Infrastructure decisions are becoming transformation triggers

Infrastructure refresh cycles are increasingly being used to reset cloud and AI strategy.

Infrastructure is now shaping transformation timing, not just supporting it. Licence renewals, virtualisation changes, and platform refresh cycles are forcing organisations to revisit long-standing architecture choices. In many cases, changes in licensing economics are making “stay as-is” harder to justify, particularly in virtualised estates.

As a result, decisions are being driven less by immediate cost optimisation and more by practical trade-offs around flexibility, workload movement, and AI readiness. Even when cloud does not reduce cost upfront, it is being adopted to handle variable AI demand, speed up deployment, and reduce future rework.

Refresh cycles are becoming decision points where organisations actively re-balance on-prem, cloud, and hybrid workloads instead of extending legacy estates.

2. Operating models are limiting scale more than technology

Most cloud and AI delays are now coming from operating friction, not platform gaps.

The technology stack is largely available. The constraint is how organisations actually run it day to day. While skills in cloud architecture, data engineering, and systems design remain uneven, the bigger issue is that teams are still structured around older delivery and control models.

Governance, security, and risk teams typically work through structured approval cycles and fixed control frameworks. Engineering teams operate in faster, iterative environments where infrastructure is continuously changing. This mismatch slows deployment, especially for AI workloads that require frequent iteration and data access.

Organisations are starting to restructure delivery into cross-functional product teams, bringing governance, security, and engineering closer to shared workflows rather than sequential approval layers.

3. Hybrid environments are shaped as much by sovereignty as by architecture

Where workloads run is increasingly determined by regulation, not design preference.

Hybrid infrastructure across Asia Pacific is being shaped by data residency rules, cross-border restrictions, and sector-specific compliance requirements. These factors directly influence where data can sit, how it moves, and which workloads can be centralised or must remain local.

This is resulting in environments that are not converging but stabilising into distinct zones. Sensitive data often remains on-prem or within specific jurisdictions, while less sensitive workloads move to cloud platforms. Integration between these environments is happening selectively, not uniformly.

Organisations are building explicit workload placement rules, with sovereignty and compliance now embedded directly into hybrid architecture decisions.
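
To make the idea of explicit placement rules concrete, the sketch below shows one way such a rule set might be expressed in code. It is illustrative only and not drawn from any organisation's actual policy: the `Workload` fields, the two-tier sensitivity scheme, the jurisdiction codes, and the placement labels are all assumptions made for this example. Real rule sets would reflect the specific residency and sector regulations that apply.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical workload descriptor; all fields are illustrative assumptions."""
    name: str
    sensitivity: str          # assumed two-tier scheme: "high" or "low"
    jurisdiction: str         # where the data originates, e.g. "SG" or "AU"
    residency_required: bool  # True if data must stay inside its jurisdiction


def place(workload: Workload, cloud_regions: set[str]) -> str:
    """Return a placement label under the assumed rules.

    Rules are checked in order, most restrictive first:
    1. Highly sensitive workloads stay on-prem.
    2. Residency-bound workloads use an in-jurisdiction cloud region
       if one is available, and otherwise stay on-prem.
    3. Everything else may run in any cloud region.
    """
    if workload.sensitivity == "high":
        return "on-prem"
    if workload.residency_required:
        if workload.jurisdiction in cloud_regions:
            return f"cloud:{workload.jurisdiction}"
        return "on-prem"
    return "cloud:any"


# Example: cloud regions assumed to be available to this organisation.
regions = {"SG", "AU"}

workloads = [
    Workload("claims-model", "high", "SG", True),
    Workload("branch-chatbot", "low", "ID", True),
    Workload("reporting", "low", "AU", True),
    Workload("marketing-content", "low", "SG", False),
]

placements = {w.name: place(w, regions) for w in workloads}
for name, target in placements.items():
    print(f"{name}: {target}")
```

Writing the rules as an ordered set of checks keeps sovereignty and compliance constraints ahead of cost or convenience, which mirrors the selective, zone-by-zone integration described above.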

4. Modernisation is funding AI expansion

AI investment is increasingly being funded through infrastructure rationalisation rather than new budget lines.

AI has moved into core infrastructure spending, covering compute, storage, data engineering, and platform readiness. At the same time, IT budgets are under pressure, making new funding difficult without trade-offs.

Modernisation programs are now being used to create that space. Cloud migration, consolidation of overlapping platforms, automation of infrastructure operations, and retirement of legacy systems are being used to release both cost and capacity. Where these programs are more mature, organisations report more automation in operations, better utilisation of existing infrastructure, and faster delivery of new workloads.

AI scale is being funded through cleanup and consolidation of existing infrastructure estates rather than incremental budget increases.

5. Complexity is now the primary scaling constraint

AI scale is being constrained more by fragmented environments than by raw infrastructure capacity.

The issue is not lack of compute or storage, but fragmentation across platforms, inconsistent data pipelines, overlapping tooling, and uneven governance models. These create friction across delivery environments even when underlying infrastructure is sufficient.

This is being amplified by uncoordinated AI adoption, where teams introduce tools and workloads independently of broader architecture decisions. The result is predictable: longer deployment cycles, higher integration effort, reduced visibility across systems, and rising operational cost per workload.

Organisations are now prioritising standardisation of platforms, consolidation of tools, and basic observability across environments to reduce delivery friction before scaling AI further.

Ecosystm Opinion

Across Asia Pacific, the constraint is less about infrastructure scale and more about how consistently distributed environments operate together under AI load. Cloud, on-prem, and hybrid systems are already in place, but the way they connect and behave as one system is uneven.

This is where pressure is now concentrating: data movement across environments, integration across platforms, and execution across teams that operate at different speeds. The issue is not building more infrastructure, but making existing environments work with fewer gaps and less manual effort.

For technology vendors, demand is shifting towards capabilities that reduce this friction: clearer integration across environments, better visibility of workloads and data flows, and simpler ways to manage distributed systems at scale.